Nebulaworks has been at the epicenter of containers, including container platforms, for almost four years. Let me provide some context. Our first orchestration platform, built from multiple OSS projects, went into production long before K8s reached v1.0. In fact, at the time nobody had coined “CaaS.” Docker didn’t have a commercial offering, and the Docker engine hadn’t yet been decomposed into the components that comprise it today (runC, containerd). If we had been helping you with an AWS-first strategy at the time, our recommendation would have been to use Beanstalk to run your containers. You could say we’ve seen it all, having deployed every platform in production, both on-premises and in the public clouds.

And in this time we’ve found the following statements to be overwhelmingly true, regardless of the end user, where an orchestration platform is going to be deployed, or the company looking to deploy the tools:

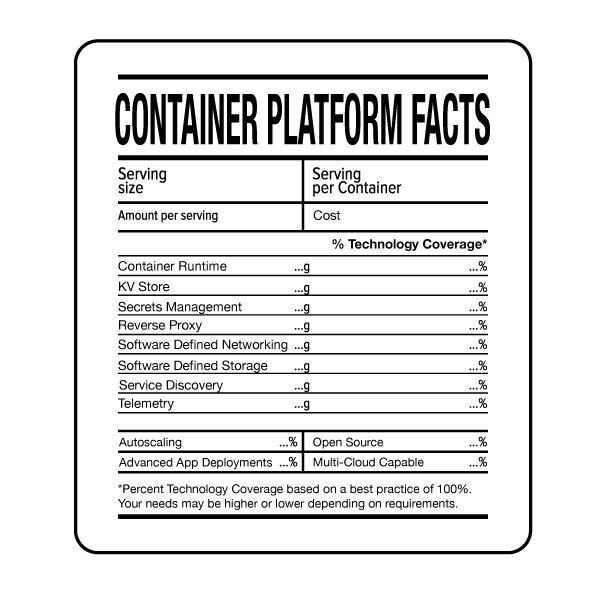

- All of the orchestration platforms are complex engineered systems. Look under the covers at what a platform like Kubernetes needs. You’ve got SDN, a distributed key-value store, layers of reverse proxies, telemetry collection, DNS services, etc. You’ll need to understand at least the architecture and how it all works together!

- They are all self-contained. That makes it tough to extract the runtime metadata they create, use it to connect to external services outside the orchestration platform, and manage consumption of those services through policy-driven approaches.

- No platform has all the answers. Whether you choose PaaS (deploying your apps as containers) or CaaS, these are opinionated systems, functionally extended largely by the edge cases their OSS contributors are facing. Take a gander at the open and closed issues, PRs, and discussions for any of the supporting OSS projects used in the platforms. You’ll learn a lot about why certain decisions were made.

So where does that leave you? If you are just jumping into the container game, you are best served by weighing the pre-assembled platforms against the value of building your own platform in terms of:

- Having the appropriate skills and managing the initial build

- Keeping up to date with changes and enhancements to the platform (i.e., mitigating further accumulation of technical debt)

- Providing something to the business that an existing open source project can’t, and at what cost

- How the platforms integrate with a modern application delivery pipeline

From there, if you choose to roll your own container platform or are thinking open source first, a sound pattern is emerging. Decompose the functionality these platforms provide (connectivity, security, and runtime) and choose best-of-breed tools that can help enable multi-cloud. For example, you can choose Docker, Consul, Vault, HAProxy, and Nomad and build a very functional platform that runs containers and non-container processes, offers security that goes well beyond secrets distribution, and is open to brokering in and out of the platform (i.e., extending into existing IT infrastructure and services).
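To make the decomposition concrete, here is a minimal Python sketch that maps each tool named above to the platform function it primarily covers and checks that no function is left uncovered. The mapping reflects our reading of each tool's main role, and the names `COMPONENTS`, `REQUIRED_FUNCTIONS`, `group_by_function`, and `missing_functions` are hypothetical, chosen only for illustration. It is a planning aid under those assumptions, not a deployment recipe.

```python
from collections import defaultdict

# Assumed primary role of each tool; real deployments often overlap
# (for example, Consul also offers a key-value store).
COMPONENTS = {
    "docker": "runtime",        # container runtime
    "nomad": "runtime",         # schedules containers and non-container processes
    "consul": "connectivity",   # service discovery
    "haproxy": "connectivity",  # reverse proxy / load balancing
    "vault": "security",        # secrets management
}

# The three functions the article says a platform must provide.
REQUIRED_FUNCTIONS = {"connectivity", "security", "runtime"}


def group_by_function(components):
    """Return a dict of function -> list of tools, in insertion order."""
    grouped = defaultdict(list)
    for tool, function in components.items():
        grouped[function].append(tool)
    return dict(grouped)


def missing_functions(components, required=REQUIRED_FUNCTIONS):
    """Return the required functions that no chosen tool covers."""
    return set(required) - set(components.values())


if __name__ == "__main__":
    for function, tools in group_by_function(COMPONENTS).items():
        print(f"{function}: {', '.join(tools)}")
    print("uncovered:", missing_functions(COMPONENTS) or "none")
```

Swapping a tool in or out of `COMPONENTS` and re-running the check is a quick way to see whether a candidate stack still covers all three functions before you commit to building it.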

Either way, you should look to experts to help with the selection, implementation, and integration of these distributed systems. They’re not simple tools with point-and-click deployments. Even the “easy buttons” require you to make choices, and out of the box they do not deliver often-desired functionality like auto-scaling. And taking these from test cases to full production-grade deployments requires additional services and capabilities that, you guessed it, are not included in the container platforms.
